Google Cloud Vision analyzes images with machine learning models. It can classify pictures, detect faces and landmarks, extract text, and return web matches. Pricing and quotas change over time, so check the current Cloud Vision pricing page before you build on it.

Google Cloud Storage is Google's object storage service. You can use it to store files and address them by bucket and object name. As with Vision, review the current Cloud Storage pricing page before using it in production.

In this post, we build a small web application that lets a user pick an image, uploads it to Cloud Storage, sends it to Cloud Vision, and shows the detection result.

When you call Cloud Vision, you can either attach the image bytes directly or point the request at a Cloud Storage object. In this example, we upload the file to Storage first and then send a gs://bucket/object reference to Vision.

You could theoretically call these APIs straight from the browser, but that would require exposing Google credentials to every user. That is not acceptable for anything beyond throwaway demos.

A better architecture is to keep the Google credentials on the server. The web application talks to the back end, and the back end talks to Google Cloud.

That solves the credential problem, but it introduces unnecessary traffic. The image would travel from the browser to our server and from there to Cloud Storage.

The better option is to let the browser upload directly to Cloud Storage without giving end users Google accounts or opening the bucket to anonymous writes.

Cloud Storage solves this with signed URLs. In this example, the server creates a short-lived V4 signed URL for a single object, and the browser uses that URL to upload the file directly.

With signed URLs, the final architecture looks like this.

The client asks the server for a signed upload URL (1). The server generates it and sends it back (2). The browser uploads the image directly to Cloud Storage (3). After that, the browser tells the server which uploaded object to analyze (4), and the server sends the Vision request (5). The server maps the Vision response to a client-friendly DTO and returns it to the Ionic application (6, 7).

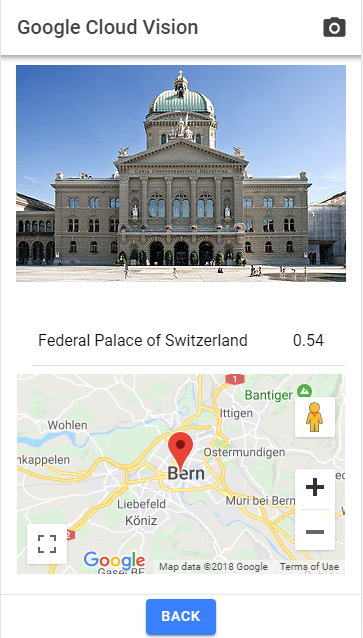

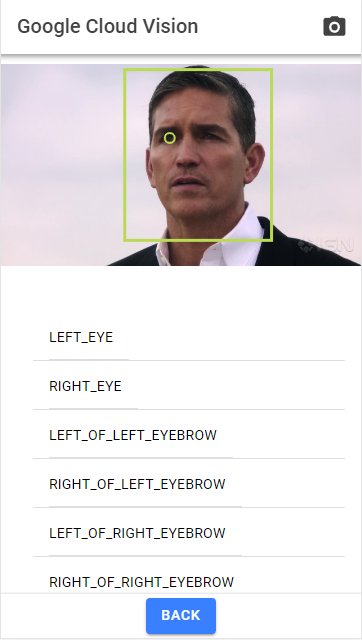

Two examples with a landmark and a face.

The source code for the Ionic web application and the Spring Boot server is hosted on GitHub: https://github.com/ralscha/blog/tree/master/googlevision

In the following sections, we look at the parts that make this flow work.

Setup

Client

The web application is written with the Ionic Framework and Angular. The server is a Spring Boot application created with Spring Initializr.

Server

Google provides Java client libraries for both services. This example depends on the Cloud Storage client and the Cloud Vision client.

</properties>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-webmvc</artifactId>

</dependency>

<dependency>

<groupId>com.google.cloud</groupId>

<artifactId>google-cloud-storage</artifactId>

<version>2.64.0</version>

</dependency>

<dependency>

<groupId>com.google.cloud</groupId>

<artifactId>google-cloud-vision</artifactId>

<version>3.86.0</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-configuration-processor</artifactId>

<optional>true</optional>

</dependency>

Open the Google Cloud console and create a project or select an existing one.

Enable the Cloud Vision API and the Maps JavaScript API. Then create an API key for Google Maps and restrict it with HTTP referrers so that only your application URLs can use it.

For server-to-server authentication, prefer Application Default Credentials. When the application runs on Google Cloud, that usually means an attached service account or Workload Identity. For local development, you can set GOOGLE_APPLICATION_CREDENTIALS or point the application at a local key file with app.serviceAccountFile. The CORS origins are externalized in the same configuration class.

@ConfigurationProperties(prefix = "app")

@Component

public class AppConfig {

private String serviceAccountFile;

private List<String> origins;

public String getServiceAccountFile() {

return this.serviceAccountFile;

}

public void setServiceAccountFile(String serviceAccountFile) {

this.serviceAccountFile = serviceAccountFile;

}

public List<String> getOrigins() {

return this.origins;

}

public void setOrigins(List<String> origins) {

this.origins = origins;

}

}

The controller prepares access to both Google services during startup. It creates a Storage instance for signed URLs and bucket access, and it builds the ImageAnnotatorSettings that are later used to create a Vision client for each incoming request.

public VisionController(AppConfig appConfig) {

try {

GoogleCredentials credentials = createCredentials(appConfig);

StorageOptions options = StorageOptions.newBuilder().setCredentials(credentials)

.build();

this.storage = options.getService();

Bucket bucket = this.storage.get(BUCKET_NAME);

if (bucket == null) {

Cors cors = Cors.newBuilder().setMaxAgeSeconds(3600)

.setMethods(Collections.singleton(HttpMethod.PUT))

.setOrigins(appConfig.getOrigins().stream().map(Origin::of)

.collect(Collectors.toList()))

.setResponseHeaders(

Arrays.asList("Content-Type", "Access-Control-Allow-Origin"))

.build();

this.storage.create(BucketInfo.newBuilder(BUCKET_NAME)

.setCors(Collections.singleton(cors)).build());

}

this.imageAnnotatorSettings = ImageAnnotatorSettings.newBuilder()

.setCredentialsProvider(FixedCredentialsProvider.create(credentials)).build();

}

catch (IOException e) {

LoggerFactory.getLogger(VisionController.class)

.error("error constructing VisionController", e);

}

}

The same startup code creates the bucket if it does not exist yet and configures bucket CORS. That part is required because the upload happens directly from the browser to Cloud Storage. Since this demo only uploads files, allowing PUT is enough.

Cors cors = Cors.newBuilder().setMaxAgeSeconds(3600)

.setMethods(Collections.singleton(HttpMethod.PUT))

.setOrigins(appConfig.getOrigins().stream().map(Origin::of)

.collect(Collectors.toList()))

.setResponseHeaders(

Arrays.asList("Content-Type", "Access-Control-Allow-Origin"))

.build();

this.storage.create(BucketInfo.newBuilder(BUCKET_NAME)

.setCors(Collections.singleton(cors)).build());
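For comparison, the same rule can be written in the JSON format that Cloud Storage uses for bucket CORS configuration. This is only a sketch for checking or reproducing the setting outside the Java code. The helper name buildCorsRules and the origin used in the usage note are assumptions, not part of the original application.

```typescript
// One entry of a Cloud Storage bucket CORS configuration (JSON form).
interface BucketCorsRule {
  origin: string[];
  method: string[];
  responseHeader: string[];
  maxAgeSeconds: number;
}

// Hypothetical helper: mirrors the values used in the Java startup code.
function buildCorsRules(origins: string[]): BucketCorsRule[] {
  return [
    {
      origin: origins,
      method: ['PUT'], // the demo only uploads, so PUT is enough
      responseHeader: ['Content-Type', 'Access-Control-Allow-Origin'],
      maxAgeSeconds: 3600
    }
  ];
}
```

Calling JSON.stringify(buildCorsRules(['http://localhost:8100']), null, 2) produces a document that could be saved to a file and applied with a tool such as gsutil cors set, if you prefer to manage the bucket outside the application.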

Take a Picture

The application targets browsers, especially on mobile devices, so the user needs a simple way to choose an image.

If you need deep native integration, Capacitor is the usual choice today. For this example, a plain web application is enough.

Modern mobile browsers handle this surprisingly well with a regular file input.

On desktop, input type="file" has always been the standard approach. On mobile, adding accept="image/*" is usually enough to let the browser offer image sources such as the photo library or the camera.

<input #fileSelector (change)="onFileChange($event)" accept="image/*" style="display: none;"

type="file">
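The accept attribute only hints at what the file picker should offer. It does not validate the selection, so a user can still pick a non-image file on many platforms. A small check on the selected file's type can catch that before anything is uploaded. The following is a minimal sketch. matchesAccept is a hypothetical helper and not part of the original application.

```typescript
// Returns true when a MIME type satisfies at least one entry of an
// accept list such as "image/*" or "image/png, image/jpeg".
function matchesAccept(mimeType: string, accept: string): boolean {
  const type = mimeType.trim().toLowerCase();
  if (type === '') {
    // Browsers report an empty type when they cannot determine it.
    return false;
  }
  return accept
    .split(',')
    .map(entry => entry.trim().toLowerCase())
    .some(entry => {
      if (entry.endsWith('/*')) {
        // Wildcard entry: compare only the part up to and including the slash.
        return type.startsWith(entry.slice(0, -1));
      }
      return entry === type;
    });
}
```

In onFileChange, such a check could run right after the file is read from the input, for example matchesAccept(file.type, 'image/*'), and return early when it fails.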

To make the UI less clunky, the app hides the native file input and shows a camera button in the toolbar. Clicking that button calls click() on the input element.

<ion-buttons slot="end">

<ion-button (click)="clickFileSelector()">

<ion-icon name="camera-outline" slot="icon-only" />

</ion-button>

</ion-buttons>

clickFileSelector(): void {

this.fileInput().nativeElement.click();

}

After the user selects a file, the code receives a File object. The app could show that file directly in an img element, but a canvas is more useful here because Vision returns coordinates for detected features, and the canvas makes it easy to draw outlines on top of the image.

The file-change handler creates an object URL with URL.createObjectURL, loads it into an Image object, draws it to the canvas, and then starts the upload flow.

onFileChange(event: Event): void {

const input = event.target as HTMLInputElement;

const file = input.files?.item(0);

if (!file) {

return;

}

this.selectedFile = file;

const url = URL.createObjectURL(file);

this.image = new Image();

this.image.onload = async () => {

const image = this.image;

if (image === null) {

URL.revokeObjectURL(url);

return;

}

this.drawImageScaled(image);

const loading = await this.loadingController.create({

message: 'Processing...'

});

this.visionResult = null;

this.selectedFace = null;

this.detail = null;

this.markers = [];

await loading.present();

try {

await this.fetchSignUrl();

} finally {

URL.revokeObjectURL(url);

await loading.dismiss();

}

};

this.image.onerror = () => {

URL.revokeObjectURL(url);

};

this.image.src = url;

}

drawImageScaled() calculates the correct ratio and keeps the image inside the visible canvas area.

private drawImageScaled(img: HTMLImageElement): void {

const width = this.canvasContainer().nativeElement.clientWidth;

const height = this.canvasContainer().nativeElement.clientHeight;

const hRatio = width / img.width;

const vRatio = height / img.height;

this.ratio = Math.min(hRatio, vRatio);

if (this.ratio > 1) {

this.ratio = 1;

}

this.canvas().nativeElement.width = img.width * this.ratio;

this.canvas().nativeElement.height = img.height * this.ratio;

this.ctx.clearRect(0, 0, width, height);

this.ctx.drawImage(img, 0, 0, img.width, img.height,

0, 0, img.width * this.ratio, img.height * this.ratio);

}
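The scaling rule itself is independent of the DOM and can be isolated in a pure function, which makes it easy to test. This is a sketch of the same calculation. The names fitWithin and ScaledSize are made up for illustration.

```typescript
interface ScaledSize {
  ratio: number;
  width: number;
  height: number;
}

// Scales an image down so it fits inside a box while keeping the aspect
// ratio. Images smaller than the box are not enlarged (ratio is capped at 1).
function fitWithin(imgWidth: number, imgHeight: number,
                   boxWidth: number, boxHeight: number): ScaledSize {
  const ratio = Math.min(boxWidth / imgWidth, boxHeight / imgHeight, 1);
  return {
    ratio,
    width: imgWidth * ratio,
    height: imgHeight * ratio
  };
}
```

For an 800x600 image and a 400x600 container, fitWithin(800, 600, 400, 600) returns a ratio of 0.5 and a canvas size of 400x300. The limiting dimension decides the ratio, which is why the code takes the minimum of the two.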

The next step is fetchSignUrl(). The server already knows the bucket name and creates the object name itself with a UUID. The only value the browser has to send is the file's type. The client sends that information as JSON.

private async fetchSignUrl(): Promise<void> {

if (this.selectedFile === null) {

throw new Error('no file selected');

}

const response = await fetch(`${environment.serverURL}/signurl`, {

method: 'POST',

headers: {

'Content-Type': 'application/json'

},

body: JSON.stringify({

contentType: this.selectedFile.type || 'application/octet-stream'

})

});

if (!response.ok) {

throw new Error('Failed to fetch signed upload URL');

}

const {uuid, url} = await response.json();

await this.uploadToGoogleCloudStorage(url);

await this.initiateGoogleVision(uuid);

}

Sign URL

The /signurl endpoint creates a UUID that becomes the object name in Cloud Storage.

Then it calls signUrl() on the Storage client and creates a V4 signed URL that is valid for three minutes. The URL is restricted to PUT, and the browser must send the matching Content-Type header.

If you want to know how V4 signing works internally, Google documents the process here: https://cloud.google.com/storage/docs/access-control/signing-urls-manually

@PostMapping(path = "/signurl", consumes = MediaType.APPLICATION_JSON_VALUE,

produces = MediaType.APPLICATION_JSON_VALUE)

public SignUrlResponse getSignUrl(@RequestBody SignUrlRequest request) {

String uuid = UUID.randomUUID().toString();

String contentType = StringUtils.hasText(request.contentType())

? request.contentType()

: MediaType.APPLICATION_OCTET_STREAM_VALUE;

String url = this.storage.signUrl(

BlobInfo.newBuilder(BUCKET_NAME, uuid).setContentType(contentType).build(),

3, TimeUnit.MINUTES, SignUrlOption.httpMethod(HttpMethod.PUT),

SignUrlOption.withContentType(), SignUrlOption.withV4Signature())

.toString();

return new SignUrlResponse(uuid, url);

}

The UUID and the signed URL are returned in SignUrlResponse.
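A V4 signed URL carries its own validity window in the query string: X-Goog-Date holds the signing time and X-Goog-Expires the lifetime in seconds. If the client wants to know how long it may wait before uploading, it can read those two values. The sketch below assumes that query format. The function names are hypothetical, and the original application does not do this.

```typescript
// Reads one query parameter from a URL string without relying on the URL class.
function queryParam(url: string, name: string): string | null {
  const queryStart = url.indexOf('?');
  if (queryStart < 0) {
    return null;
  }
  for (const pair of url.slice(queryStart + 1).split('&')) {
    const eq = pair.indexOf('=');
    if (eq > 0 && pair.slice(0, eq) === name) {
      return decodeURIComponent(pair.slice(eq + 1));
    }
  }
  return null;
}

// Returns the moment a V4 signed URL stops being valid, or null when the
// expected parameters are missing or malformed.
function signedUrlExpiresAt(signedUrl: string): Date | null {
  const signedAt = queryParam(signedUrl, 'X-Goog-Date'); // e.g. 20240101T120000Z
  const lifetime = queryParam(signedUrl, 'X-Goog-Expires'); // seconds
  if (signedAt === null || lifetime === null) {
    return null;
  }
  const m = /^(\d{4})(\d{2})(\d{2})T(\d{2})(\d{2})(\d{2})Z$/.exec(signedAt);
  const seconds = Number(lifetime);
  if (m === null || !isFinite(seconds)) {
    return null;
  }
  const signedAtMs = Date.UTC(Number(m[1]), Number(m[2]) - 1, Number(m[3]),
    Number(m[4]), Number(m[5]), Number(m[6]));
  return new Date(signedAtMs + seconds * 1000);
}
```

With the three-minute lifetime used on the server, X-Goog-Expires would be 180, so the returned date is three minutes after the signing time.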

Upload to Google Cloud Storage

Once the client has the signed URL, the upload itself is just a fetch() call with PUT. The important part is sending the same Content-Type value that was used when the URL was created.

The body of the request is simply the selected File object.

private async uploadToGoogleCloudStorage(signURL: string): Promise<void> {

if (this.selectedFile === null) {

throw new Error('no file selected');

}

const response = await fetch(signURL, {

method: 'PUT',

headers: {

'Content-Type': this.selectedFile.type || 'application/octet-stream',

},

body: this.selectedFile

});

if (!response.ok) {

throw new Error('Failed to upload file to Google Cloud Storage');

}

}
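Because the content type is part of what the server signs, the value sent to /signurl and the header on the PUT request must be identical. Otherwise Cloud Storage will typically reject the upload with a signature mismatch. One way to rule out drift between the two call sites is to derive the value once. This is a sketch under that assumption. planUpload and UploadPlan are hypothetical names.

```typescript
const FALLBACK_CONTENT_TYPE = 'application/octet-stream';

interface UploadPlan {
  // JSON body for the POST to /signurl
  signRequestBody: string;
  // Headers for the PUT to the signed URL
  uploadHeaders: { 'Content-Type': string };
}

// Derives the content type once so both requests use the same value.
function planUpload(fileType: string): UploadPlan {
  // File.type is an empty string when the browser cannot determine the type.
  const contentType = fileType || FALLBACK_CONTENT_TYPE;
  return {
    signRequestBody: JSON.stringify({ contentType }),
    uploadHeaders: { 'Content-Type': contentType }
  };
}
```

fetchSignUrl() and uploadToGoogleCloudStorage() could then both read from the same plan object instead of repeating the fallback expression.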

Google Cloud Vision

After a successful upload, the client sends another JSON request to the Spring Boot back end with the UUID of the uploaded object.

private async initiateGoogleVision(uuid: string): Promise<void> {

const response = await fetch(`${environment.serverURL}/vision`, {

method: 'POST',

headers: {

'Content-Type': 'application/json'

},

body: JSON.stringify({uuid})

});

if (!response.ok) {

throw new Error('Failed to start Google Cloud Vision request');

}

this.visionResult = await response.json();

}

That UUID is the object name in Cloud Storage.

Cloud Vision accepts either inline image bytes or a Cloud Storage URI. In this example, the server builds a gs://bucket/object URI from the bucket constant and the UUID.
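Since the object name arrives from the client, it may be worth checking that it really looks like a UUID before it ends up in a gs:// URI. The server in this post is written in Java. The following TypeScript sketch only illustrates the idea, and toGcsUri is a hypothetical helper, not the author's code.

```typescript
// Matches the textual form produced by java.util.UUID.toString().
const UUID_PATTERN =
  /^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$/i;

// Builds a Cloud Storage URI for an object whose name must be a UUID.
function toGcsUri(bucket: string, objectName: string): string {
  if (!UUID_PATTERN.test(objectName)) {
    throw new Error('object name is not a UUID');
  }
  return `gs://${bucket}/${objectName}`;
}
```

The same pattern check translates directly to a regular expression or a UUID.fromString call on the Java side.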

Before sending the request, the controller adds the features we want to detect: faces, landmarks, logos, labels, text, safe search, and web detection. In a real application, you would usually enable only the features you actually need.

@PostMapping(path = "/vision", consumes = MediaType.APPLICATION_JSON_VALUE,

produces = MediaType.APPLICATION_JSON_VALUE)

public VisionResult vision(@RequestBody VisionRequest request) throws IOException {

String uuid = request.uuid();

try (ImageAnnotatorClient vision = ImageAnnotatorClient

.create(this.imageAnnotatorSettings)) {

Image img = Image.newBuilder()

.setSource(

ImageSource.newBuilder().setImageUri("gs://" + BUCKET_NAME + "/" + uuid))

.build();

AnnotateImageRequest annotateRequest = AnnotateImageRequest.newBuilder()

.addFeatures(Feature.newBuilder().setType(Type.FACE_DETECTION).build())

.addFeatures(Feature.newBuilder().setType(Type.LANDMARK_DETECTION).build())

.addFeatures(Feature.newBuilder().setType(Type.LOGO_DETECTION).build())

.addFeatures(Feature.newBuilder().setType(Type.LABEL_DETECTION)

.setMaxResults(20).build())

.addFeatures(Feature.newBuilder().setType(Type.TEXT_DETECTION).build())

.addFeatures(Feature.newBuilder().setType(Type.SAFE_SEARCH_DETECTION).build())

.addFeatures(

Feature.newBuilder().setType(Type.WEB_DETECTION).setMaxResults(10).build())

.setImage(img).build();

// More Features:

// DOCUMENT_TEXT_DETECTION

// IMAGE_PROPERTIES

// CROP_HINTS

// PRODUCT_SEARCH

// OBJECT_LOCALIZATION

List<AnnotateImageRequest> requests = new ArrayList<>();

requests.add(annotateRequest);

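// Sends the batch request; Vision evaluates every requested feature for the image

BatchAnnotateImagesResponse response = vision.batchAnnotateImages(requests);

List<AnnotateImageResponse> responses = response.getResponsesList();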

Pricing tiers and quota rules change, so always verify them on the current pricing page before you let users upload large numbers of images.

The Vision client library supports multiple images per request, but this demo sends one image at a time. For each image, the API returns an AnnotateImageResponse.

The controller maps that response into the VisionResult DTO that the client can render directly.

For brevity, only the error check and the label mapping are shown here. The rest of the mapping code is on GitHub:

VisionResult result = new VisionResult();

AnnotateImageResponse resp = responses.get(0);

if (resp.hasError()) {

result.setError(resp.getError().getMessage());

return result;

}

if (resp.getLabelAnnotationsList() != null) {

List<Label> labels = new ArrayList<>();

for (EntityAnnotation ea : resp.getLabelAnnotationsList()) {

Label l = new Label();

l.setScore(ea.getScore());

l.setDescription(ea.getDescription());

labels.add(l);

}

result.setLabels(labels);

}
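On the client, the label list from the DTO can be filtered and sorted before it is rendered. Vision reports a score between 0 and 1 for each label. The sketch below assumes a Label shape with description and score, matching the two fields mapped above. topLabels and its thresholds are illustrative assumptions.

```typescript
interface Label {
  description: string;
  score: number; // confidence between 0 and 1
}

// Keeps labels at or above minScore, sorted by descending score,
// and returns at most `limit` of them. The input array is not modified.
function topLabels(labels: Label[], minScore: number, limit: number): Label[] {
  return labels
    .filter(label => label.score >= minScore)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit);
}
```

Filtering on the client keeps the server response complete while letting the UI decide how many low-confidence labels to show.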

After the response is prepared, the controller deletes the uploaded object from Cloud Storage so the bucket does not accumulate temporary files.

finally {

if (StringUtils.hasText(uuid)) {

this.storage.delete(BlobId.of(BUCKET_NAME, uuid));

}

Back on the client, the Ionic application renders the grouped result lists below the picture. Labels and safe-search values are read-only. Tapping other entries draws rectangles or points on the canvas so the user can see where Vision found the feature.
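Vision reports bounding polygons in pixel coordinates of the original image, while the canvas shows a scaled copy. Before drawing, each vertex therefore has to be multiplied by the ratio computed in drawImageScaled(). A minimal sketch of that conversion follows. The Vertex and Rect types and both function names are assumptions for illustration.

```typescript
interface Vertex {
  x: number;
  y: number;
}

interface Rect {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Converts image-pixel coordinates to canvas coordinates.
function toCanvasPoints(vertices: Vertex[], ratio: number): Vertex[] {
  return vertices.map(v => ({ x: v.x * ratio, y: v.y * ratio }));
}

// Smallest axis-aligned rectangle that contains all points,
// suitable for a call such as ctx.strokeRect(x, y, width, height).
function boundingRect(points: Vertex[]): Rect | null {
  if (points.length === 0) {
    return null;
  }
  const xs = points.map(p => p.x);
  const ys = points.map(p => p.y);
  const minX = Math.min(...xs);
  const minY = Math.min(...ys);
  return {
    x: minX,
    y: minY,
    width: Math.max(...xs) - minX,
    height: Math.max(...ys) - minY
  };
}
```

With a ratio of 0.5, a face polygon spanning (100, 200) to (300, 400) in the original image becomes a 100x100 rectangle at (50, 100) on the canvas.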

Landmarks are the one special case. When you tap a detected landmark, the application shows a Google Map with a marker at the returned coordinates.

Google Maps is integrated with the official @angular/google-maps package.

<div id="map">

@if (markers.length > 0) {

<google-map [options]="mapOptions">

@for (m of markers; track m) {

<map-marker [position]="{lat: m.lat, lng: m.lng}" />

}

</google-map>

}

</div>
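The template expects markers with lat and lng properties. How the application fills that array is not shown here, so the following is only a sketch. It assumes a hypothetical landmark DTO whose locations carry latitude and longitude, as in the Vision API's landmark annotations, and converts them into the marker shape used above.

```typescript
interface Marker {
  lat: number;
  lng: number;
}

// Hypothetical DTO shape; the real VisionResult may differ.
interface LandmarkResult {
  description: string;
  locations: { latitude: number; longitude: number }[];
}

// Converts the locations of one landmark into map markers and
// drops entries with coordinates outside the valid ranges.
function toMarkers(landmark: LandmarkResult): Marker[] {
  return landmark.locations
    .filter(loc =>
      isFinite(loc.latitude) && Math.abs(loc.latitude) <= 90 &&
      isFinite(loc.longitude) && Math.abs(loc.longitude) <= 180)
    .map(loc => ({ lat: loc.latitude, lng: loc.longitude }));
}
```

Assigning the result to this.markers when a landmark is tapped would make the @if block above render the map.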

This example shows a clean way to combine Ionic, Spring Boot, Cloud Storage, and Cloud Vision without routing large uploads through your own server. The browser only receives a short-lived upload URL, and the server keeps control over Google credentials and the Vision request.

If you have further questions or ideas for improvement, send me a message.